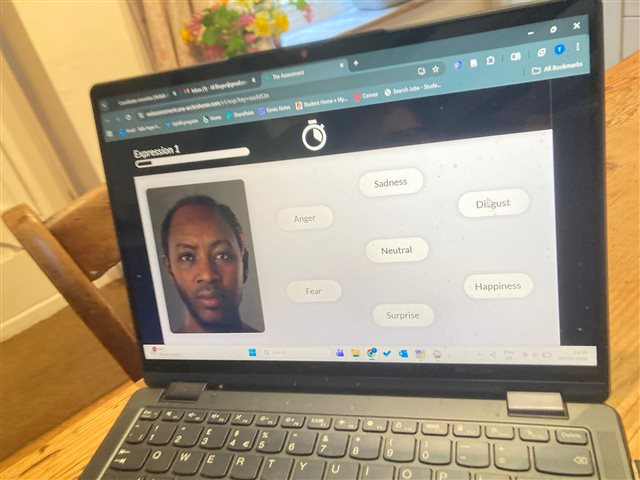

Yes, this is after I told them I was autistic (they said they would apply a 'score adjuster' afterwards). Yes, it produced the predictable result that I was terrible at it. Here is an example screenshot.

The job had nothing to do with recognising emotions; it was for a technical role, not a people-focused one. It just seems ridiculous that they can do this so openly? As I feel like naming and shaming today, this was for a WSP job and the psychometric testing was by Arctic Shores.